Adversarial Exposure Validation (AEV) Guide: Prove Attack Paths

Adversarial Exposure Validation (AEV): Definition, Architecture, and CTEM Guide (2026)

Summary

Adversarial Exposure Validation (AEV) proves whether real attack paths are exploitable in your environment using execution evidence. It validates detection during execution and re-executes the same path after remediation to verify closure.

What Is Adversarial Exposure Validation (AEV)?

Definition: Adversarial Exposure Validation is the practice of executing attacker-like behavior to prove an attack path is exploitable in your environment, measuring detection, then re-running the same path after remediation to verify it stays closed.

Adversarial Exposure Validation (AEV) is a security testing discipline that answers one operational question: "Can an attacker chain what exists today into impact, and after we fix it, does the same path fail?"

That's the difference between enumeration and validation.

Most modern tools are excellent at detecting vulnerabilities, misconfigurations, and excessive permissions. The problem is that breaches usually happen when those signals chain into a path: a small misconfiguration + a permissive identity + an overlooked trust relationship + a detection blind spot, not from a single "critical" finding.

If testing is periodic, if paths aren't re-evaluated as state changes, or if remediation isn't automatically re-validated, the program is still assumption-driven, even if it's branded as "continuous."

So when evaluating an AEV vendor, ask whether they continuously prove path-level exploitability with execution evidence, or whether they still score and report.

Why AEV Emerged as a Category

Adversarial Exposure Validation (AEV) emerged because the assumptions underlying traditional security assessments stopped matching modern environments.

Security assessment used to rely on a stable set of premises: infrastructure changed slowly, identities were relatively static, trust boundaries were explicit, and exploitability could be reasonably inferred from severity and configuration state. Vulnerabilities were evaluated largely in isolation, and the environment was assumed to look roughly the same at assessment time as it did during exploitation.

None of those premises hold today. Modern environments are dominated by identity, cloud control planes, SaaS integrations, and continuous deployment. Attackers exploit relationships between systems rather than individual flaws. They abuse permissions, tokens, trust policies, and weak detection boundaries far more often than single critical vulnerabilities.

Most real-world breaches are built by chaining low and medium severity conditions: an over-permissive identity here, a mis-scoped trust policy there, an exposed credential with weak detection, followed by lateral movement that never trips a signature. These paths are rarely visible in any single tool. In ephemeral systems, state changes faster than it can be reviewed. Point-in-time assessments assume that testing coverage can keep up with change. In CI/CD-driven environments, that assumption fails immediately.

This is why breach postmortems repeatedly reference "low-severity misconfiguration," "non-critical permission," or "accepted risk." The individual signals existed, but nobody proved whether they combined into a viable attack path under real constraints.

AEV formalizes the shift from predictive risk to empirical risk. Instead of assuming exploitability from severity or posture, AEV executes adversary behavior to prove whether a path to impact is feasible in the environment as it exists today and then re-executes after remediation to verify the path stays closed.

As the term has gained attention, many vendors have adopted the label without changing their execution model. Some repackage exposure scoring or posture dashboards as "AEV." Others run isolated simulations (single techniques, atomic BAS tests) that never prove a full path, never validate detection outcomes during execution, and never re-validate remediation.

AEV is about causality and closure: This condition enables this behavior, which produces this impact, and after remediation, the same behavior no longer succeeds. If a platform can't show you that loop end-to-end with execution evidence, it isn't validating exposure. It's describing it.

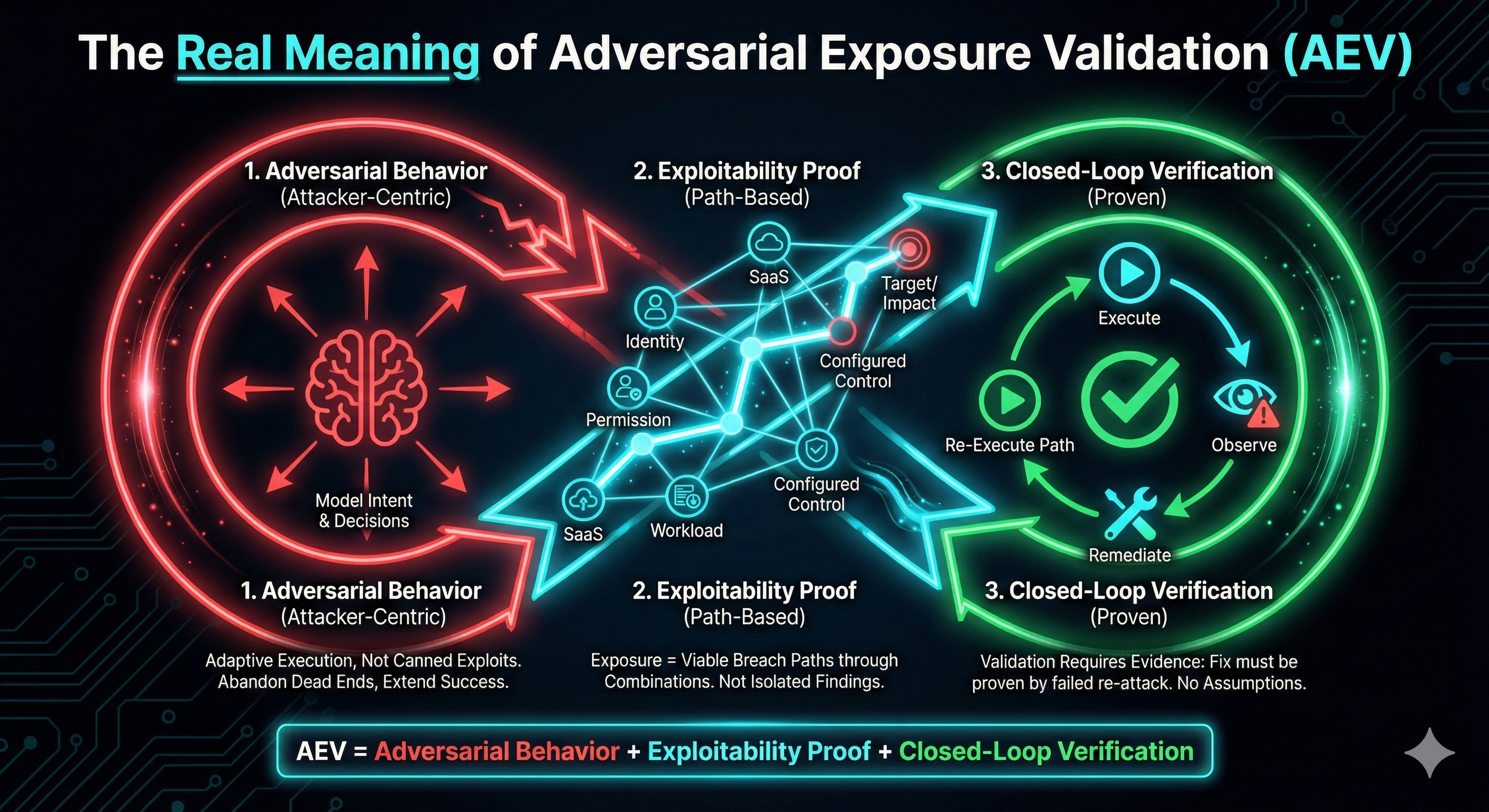

The Real Meaning of "Adversarial Exposure Validation"

Adversarial Means Attacker-Centric, Not Tool-Centric

In Adversarial Exposure Validation, "adversarial" does not mean running canned exploits or executing MITRE techniques in isolation. It means modeling attacker intent, constraints, and decision-making.

Attackers do not follow compliance frameworks. They do not care whether a control is "configured." They care whether it can be bypassed, chained, or ignored. AEV reflects this reality by executing behaviors that attackers actually use, observing system responses, adapting based on outcomes, and abandoning paths that do not yield progress. Paths that succeed are extended. This adaptive, outcome-driven execution is what separates adversarial validation from traditional control testing.

Compliance-aligned validation asks whether a control is present. Adversarial validation asks whether it can be bypassed, chained around, or ignored entirely.

This attacker-centric framing is what makes Adversarial Exposure Validation stand out from traditional security validation.

Exposure Is About Paths, Not Findings

In Adversarial Exposure Validation, an "exposure" is not a vulnerability, a misconfiguration, or an alert viewed in isolation.

An exposure is any condition that enables progress toward impact. Often, this condition is only meaningful when combined with others. A permissive IAM role may be harmless alone. A workload identity with overly broad access may appear low risk. A SaaS integration with implicit trust may seem operationally necessary. Individually, none of these trigger urgency. Combined, they form a viable breach path.

AEV treats exposures as graph problems, not checklist items. It evaluates how identities, permissions, infrastructure, applications, endpoints, and human workflows interact under adversarial pressure. Risk is not attached to a single node. It emerges from reachable paths through the graph.

This is why Adversarial Exposure Validation consistently surfaces risks that traditional enumeration misses: attackers exploit relationships, not line items. AEV focuses on how small weaknesses combine into real compromise paths.
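To make the graph framing concrete, here is a minimal sketch that models enabling conditions as directed edges and enumerates cycle-free paths from an entry point to a high-impact target. The node names, edges, and function names are hypothetical and chosen only for illustration. Enumerating candidate paths shows what might be reachable; under AEV each candidate would still need to be executed before it counts as an exposure.

```python
from collections import defaultdict

# Hypothetical edges: control of the source enables progress to the target.
EDGES = [
    ("internet", "exposed_ci_token"),
    ("exposed_ci_token", "ci_role"),
    ("ci_role", "workload_identity"),
    ("workload_identity", "customer_db"),
    ("internet", "marketing_site"),
]


def build_graph(edges):
    """Build an adjacency list from (source, target) pairs."""
    graph = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)
    return graph


def find_paths(graph, start, goal):
    """Return every simple (cycle-free) path from start to goal using iterative DFS."""
    paths = []
    stack = [(start, [start])]
    while stack:
        node, path = stack.pop()
        if node == goal:
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in path:  # skip nodes already on this path to avoid cycles
                stack.append((nxt, path + [nxt]))
    return paths


graph = build_graph(EDGES)
candidate_paths = find_paths(graph, "internet", "customer_db")
for p in candidate_paths:
    print(" -> ".join(p))
```

No single edge here looks urgent on its own; the risk only appears once the edges are joined into a path, and removing any one edge on that path makes the target unreachable in this toy model.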

Validation Requires Execution Evidence

Validation is one of the most overloaded and misused terms in security.

In AEV, validation requires execution. It is not enough to claim that something is exploitable, detectable, or fixed; each claim must be demonstrated by controlled adversarial action. If a detection works, that must be proven by observing alerts during real attack behavior. If a remediation reduces risk, that must be validated by re-executing the same attack path and confirming that it fails.

Validation is a loop: execute, observe, remediate, re-execute. Anything short of this loop is assumption. This requirement fundamentally changes how security effectiveness is measured. Success is no longer defined by coverage, severity, or configuration state, but by whether adversary behavior can still produce impact.

If exploitability, detection, or remediation effectiveness is inferred rather than proven, it is not validation.
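The execute, observe, remediate, re-execute loop can be sketched in a few lines. This is a toy model under stated assumptions: `Environment`, `execute_path`, and the step names are hypothetical stand-ins, and a real system would act against live infrastructure rather than a set of strings.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Toy stand-in for a live environment (hypothetical)."""
    open_conditions: set = field(default_factory=set)  # steps an attacker can currently perform
    monitored: set = field(default_factory=set)        # steps that raise an alert when performed


def execute_path(env, steps):
    """Attempt each step in order and stop at the first one the environment blocks."""
    completed = []
    for step in steps:
        if step not in env.open_conditions:
            break
        completed.append(step)
    return {
        "reached_impact": completed == list(steps),
        "completed": completed,
        "detected": [s for s in completed if s in env.monitored],
    }


path = ["assume_ci_role", "read_secret", "query_customer_db"]
env = Environment(open_conditions=set(path), monitored={"query_customer_db"})

before = execute_path(env, path)             # execute and observe
env.open_conditions.discard("read_secret")   # remediate (hypothetical fix)
after = execute_path(env, path)              # re-execute the same path

closure_verified = before["reached_impact"] and not after["reached_impact"]
print(before)
print(after)
print("closure verified:", closure_verified)
```

The point of the sketch is that closure is a comparison between two executions of the same path, not a property read from configuration state.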

The AEV Equation

Adversarial Exposure Validation (AEV) can be reduced to a simple but strict definition:

AEV = adversarial behavior + exploitability proof + closed-loop verification

Remove any one of these elements, and the system reverts to assumption-based security, regardless of how advanced the tooling appears. This is the distinction that matters in practice, and the reason Adversarial Exposure Validation (AEV) represents a real methodological shift rather than a rebranding exercise.

What a Real AEV System Must Do

A real Adversarial Exposure Validation (AEV) system behaves less like a scanner and more like an adversarial control system.

Its job is not to enumerate issues, but to continuously determine whether adversary behavior can produce impact in the environment as it exists right now. To do that, it must evaluate environmental state, select attack scenarios based on threat intelligence and asset criticality, execute adversary behavior safely, observe both system and human responses, and feed execution results back into prioritization and remediation workflows.

Crucially, a real AEV system treats remediation as a hypothesis to be tested, not an outcome to be assumed.

This is why AEV cannot be a point solution. It is an orchestration layer that connects adversary emulation, attack-path validation, detection and response measurement, and remediation verification into a single operational loop. Remove any part of that loop and the system immediately drifts back into assumption-based security.

How OFFENSAI Implements Continuous Cloud Validation

OFFENSAI aligns with the Adversarial Exposure Validation discipline because its design assumes that execution evidence is the only reliable signal.

Rather than starting from vulnerabilities or compliance controls, it starts from adversary behavior and works backward. It validates how identity, cloud permissions, applications, endpoints, and detection systems interact under attack conditions, and it re-tests those interactions after remediation.

From a security research and engineering perspective, this approach matters because it reduces uncertainty. It replaces abstract risk discussions with observable outcomes, which is ultimately what security leaders need to make decisions.

Core characteristics aligned with true AEV methodology include:

- Adversary-mode testing based on realistic threat actor behavior

- Cross-domain validation across identity, cloud, applications, endpoints, and human vectors

- Exploitability-first prioritization grounded in execution evidence

- Detection and response validation embedded in testing

- Closed-loop remediation workflows with verification

- Autonomous execution combined with human red-team oversight

This allows security teams that work with OFFENSAI to focus remediation on what is exploitable, not what is merely detectable.

AEV vs PTaaS vs BAS vs Bug Bounty vs Red Teaming

AEV is not Penetration Testing as a Service (PTaaS), Breach and Attack Simulation (BAS), Bug Bounty as a Service (BBaaS), or Red Teaming. It changes how their outputs are used.

AEV is the validation layer: it proves an attack path is exploitable in your environment right now, and it re-executes the same path after remediation to verify it stays closed.

The others are valuable inputs to that loop:

- PTaaS: discovers issues in a defined scope at a point in time

- BAS: regression-tests controls/detections using known techniques

- Bug Bounty: discovers novel vulnerabilities via researchers

- Red teaming: runs realistic campaigns to test org readiness

- AEV: validates exploitability continuously and verifies remediation by re-running paths

Why Penetration Testing as a Service (PTaaS) Is Not AEV, Even When It's Continuous or Automated

Short answer: PTaaS is discovery. AEV is validation.

Most PTaaS platforms, including automated or autonomous ones, operate within fixed scopes and predefined execution logic, triggered on a schedule or by a human-initiated workflow. They prove exploitability at a moment in time and produce findings. Once the test ends, validation ends too.

AEV is tied to the current environment state, not test timing. When identity, cloud, or application state changes, or when remediation is applied, the same attack path is re-executed to prove it no longer works.

This difference becomes clearest in the questions each approach answers:

- PTaaS asks: "What can be exploited when we run this test?"

- Adversarial Exposure Validation asks: "What is exploitable right now, and is it still exploitable after we fix it?"

This is why PTaaS, regardless of cadence or automation, does not become AEV. So when evaluating "AEV" claims from PTaaS vendors, the key question is what environmental changes force the system to re-execute validation without human intervention.
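One way to picture state-driven re-execution, as opposed to schedule-driven testing, is to fingerprint the state an attack path depends on and re-run validation whenever that fingerprint changes. The sketch below is illustrative only: `RevalidationTrigger`, `fake_validate`, and the state fields are hypothetical, and a real system would source state from cloud and identity change events rather than a dictionary.

```python
import hashlib
import json


def state_fingerprint(state):
    """Hash a canonical JSON rendering of the state relevant to one attack path."""
    canonical = json.dumps(state, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class RevalidationTrigger:
    """Re-run validation for a path only when its underlying state has changed."""

    def __init__(self, validate):
        self._validate = validate   # callable(path_id, state) -> result
        self._last_seen = {}        # path_id -> fingerprint at last validation

    def observe(self, path_id, state):
        fingerprint = state_fingerprint(state)
        if self._last_seen.get(path_id) == fingerprint:
            return None             # nothing changed, so no re-execution
        self._last_seen[path_id] = fingerprint
        return self._validate(path_id, state)


runs = []


def fake_validate(path_id, state):
    """Hypothetical placeholder for actually executing the attack path."""
    runs.append(path_id)
    return f"run #{len(runs)} for {path_id}"


trigger = RevalidationTrigger(fake_validate)
state = {"role": "ci-deployer", "trusted_by": ["build-account"], "permissions": ["s3:GetObject"]}

first = trigger.observe("path-1", state)       # first sighting: validate
unchanged = trigger.observe("path-1", state)   # same state: skipped
state["permissions"].append("iam:PassRole")    # permission drift
changed = trigger.observe("path-1", state)     # changed state: re-validate
print(first, unchanged, changed)
```

Here no human or calendar decides when the path is re-run; the permission change alone forces re-execution.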

Breach and Attack Simulation vs Adversarial Exposure Validation

Short answer: BAS validates controls. AEV validates exploitability.

Breach and Attack Simulation executes known attacker techniques at scale to test whether controls and detections trigger. Its strength is consistency. BAS is excellent for detection regression testing and control assurance, especially in large environments, where manual testing does not scale.

The limitation is that BAS is usually technique-centric, so tests run in isolation or shallow sequences. A technique may run successfully or be detected, but whether that activity can be chained into a real path to impact is often out of scope.

AEV is path-centric. It evaluates whether adversary behavior can be chained, adapted, and extended across identity, cloud, and application state to reach impact in the environment as it exists right now.

The difference is clearest during remediation:

- BAS assumes risk is reduced when controls block techniques.

- AEV re-executes the same attack path after remediation to prove attacker progress is no longer possible.

The questions each approach answers make this explicit:

- BAS asks: "Do our controls detect known techniques?"

- AEV asks: "Can an attacker still reach impact, and did our fix actually stop them?"

This is why BAS, even when continuous or AI-assisted, does not become AEV. If a system cannot prove that a full attack path fails after remediation, it is not Adversarial Exposure Validation.

Why Bug Bounty as a Service (BBaaS) Is Not Adversarial Exposure Validation

Short answer: Bug bounty finds bugs. AEV proves exposure.

Bug Bounty as a Service is a discovery mechanism, optimized for breadth and creativity. External researchers uncover unexpected vulnerabilities, edge cases, and business logic flaws that automated tools and internal teams often miss. This makes BBaaS extremely effective at discovery (uncovering low-signal issues that would otherwise go unnoticed).

What it doesn't provide is systematic validation. Bounty coverage is opportunistic and uneven, and it typically proves exploitability once, when a researcher reports it.

Even when BBaaS is described as "continuous," continuity refers to researcher availability, not to environmental validation. If identity permissions change, trust relationships evolve, or a fix introduces an equivalent path, nothing automatically re-evaluates exploitability. The exposure remains open until someone independently rediscovers it.

The difference becomes most visible after a fix:

- BBaaS assumes risk is reduced once a reported bug is resolved.

- AEV requires the original attack path to fail when re-executed.

The questions each approach answers make this explicit:

- BBaaS answers the question: "What vulnerabilities can external researchers find?"

- AEV answers a different question: "What attack paths are exploitable right now, and are they still exploitable after remediation?"

Why Red Teaming and Autonomous Red Teaming Are Not Adversarial Exposure Validation

Short answer: Red teaming tests realism in campaigns. AEV validates exploitability continuously.

Traditional red teaming is designed to stress-test how people, processes, and technology respond under adversarial pressure. Red teams operate with intent, creativity, and adaptability, often uncovering complex, high-impact attack paths that no automated system would find on its own. However, that depth is also the limitation.

Red team engagements are expensive, time-bound, and episodic by design. They cannot run continuously or re-evaluate exploitability every time identity, cloud, or application state changes. Between engagements, exploitability is inferred. Remediation is typically accepted based on fixes and lessons learned, not on re-execution of the original attack paths.

Autonomous red teaming improves frequency by automating parts of execution. But in many implementations it's still scenario-driven: campaigns run periodically, then results are reviewed after the fact.

AEV is state-driven. When identity permissions shift, cloud trust changes, or remediation is applied, AEV automatically re-validates whether attacker progress to impact is still possible, and verifies closure by re-running the same path.

- Red teaming asks: "How would a skilled attacker compromise us in a realistic campaign?"

- AEV asks: "Can attackers still reach impact right now, and did our fixes actually stop them?"

Red teams, human or autonomous, are critical inputs into an AEV program. They are ideal for high-impact scenario validation and organizational readiness testing. But they do not replace AEV, because they cannot run continuously, eliminate blind time, or enforce closed-loop verification in a constantly changing environment. While red teaming provides realism, AEV provides proof.

KPIs That Matter in Adversarial Exposure Validation

AEV effectiveness cannot be measured with traditional vulnerability metrics. Counts, severities, and coverage percentages do not reflect attacker feasibility.

Instead, meaningful AEV metrics focus on outcomes.

The number of exploitable attack paths indicates how many viable routes to impact currently exist, independent of how many findings are reported. Mean time to detect adversarial activity reflects whether detection systems respond under realistic attack conditions, not lab scenarios. Mean time to remediate validated exposures measures how quickly the organization can eliminate proven risk, rather than how fast tickets are closed.

Equally important is the percentage of remediations that are successfully verified. This metric exposes how often fixes actually eliminate exploitability versus merely changing configuration state. Residual exposure over time captures whether risk is genuinely decreasing or simply shifting as the environment evolves.

Key AEV KPIs:

- Exploitable attack paths (count + trend)

- Mean time to detect (MTTD) validated adversary behavior

- Mean time to remediate (MTTR) validated exposures

- Remediation verification rate (percentage of paths that fail after fixes)

- Residual exposure over time (does exploitability actually decrease?)
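As a rough illustration of how these KPIs might be computed from validation records, consider the sketch below. The `PathRecord` fields and sample data are hypothetical. It also assumes that MTTD and MTTR are both measured from the moment the path was proven exploitable, and that a path counts as still exploitable until a re-run shows it failing; a given program may define these differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class PathRecord:
    path_id: str
    executed_at: datetime               # when the path was proven exploitable
    detected_at: Optional[datetime]     # first alert tied to the run, if any
    remediated_at: Optional[datetime]   # when a fix was applied, if any
    fails_on_rerun: Optional[bool]      # re-execution result; None if never re-run


def mean(deltas):
    """Average a list of timedeltas; None when there is nothing to average."""
    return sum(deltas, timedelta()) / len(deltas) if deltas else None


def kpis(records):
    detect = [r.detected_at - r.executed_at for r in records if r.detected_at]
    remediate = [r.remediated_at - r.executed_at for r in records if r.remediated_at]
    fixed = [r for r in records if r.remediated_at]
    verified = [r for r in fixed if r.fails_on_rerun is True]
    return {
        # still exploitable unless a re-run has shown the path failing
        "exploitable_paths": sum(1 for r in records if r.fails_on_rerun is not True),
        "mttd": mean(detect),
        "mttr": mean(remediate),
        "verification_rate": len(verified) / len(fixed) if fixed else None,
    }


t0 = datetime(2026, 1, 5, 9, 0)
records = [
    PathRecord("p1", t0, t0 + timedelta(minutes=10), t0 + timedelta(days=2), True),
    PathRecord("p2", t0, None, t0 + timedelta(days=4), False),  # fix applied, path still works
    PathRecord("p3", t0, t0 + timedelta(minutes=30), None, None),
]
result = kpis(records)
print(result)
```

In this sample, two fixes were applied but only one was verified, so a ticket-based view would report two exposures closed while the verification rate shows one path still open.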

Conclusion

Adversarial Exposure Validation is not a feature, a scan, or a dashboard. It is a methodological shift. It is a practical, adversary-centered approach to proving whether security exposures are exploitable and whether defenses work in real-world conditions.

As the category matures, the distinction between tools that claim AEV and systems that actually practice it will become obvious. The difference is not branding. It is evidence.

If you remember one buying question, make it this: "Show me the same attack path failing after we fix it."

FAQs

What problem does Adversarial Exposure Validation actually solve?

Adversarial Exposure Validation solves the problem of false prioritization in security. Most security programs know what they have (assets, vulnerabilities, misconfigurations), but cannot reliably determine which exposures can be exploited in practice, under current conditions, by a real adversary. AEV replaces inferred risk with execution evidence. Instead of assuming that a vulnerability, permission, or misconfiguration is dangerous, AEV proves whether it can be used as part of a real attack path and whether existing controls stop it. This eliminates theoretical noise and allows teams to focus remediation effort on exposures that actually create breach paths.

How is AEV different from vulnerability management or exposure management?

Vulnerability and exposure management focus on enumeration and prioritization. AEV focuses on validation. Traditional exposure management aggregates signals such as CVEs, misconfigurations, identities, and attack surface data, and assigns risk scores. These scores are inherently predictive. They assume exploitability based on severity, context, or heuristics. AEV moves beyond prediction by executing adversary behavior against the environment as it exists. It determines whether exposures can be chained, whether lateral movement is possible, whether privileges can be abused, and whether defenders detect and respond. The output is not a score, but evidence of exploitability and impact.

Is AEV just a new name for breach and attack simulation (BAS)?

No. BAS is a component; AEV is the outcome. BAS platforms execute predefined attacker techniques to test controls at scale. However, BAS often runs techniques in isolation and does not validate end-to-end attack paths, business impact, or remediation effectiveness. AEV may use BAS as an execution mechanism, but it requires additional capabilities: attack path discovery, exploit chaining, detection and response measurement, remediation verification, and continuous orchestration. Without these elements, BAS remains control testing rather than exposure validation.

How does AEV differ from penetration testing?

Penetration testing is episodic and discovery-oriented. AEV is continuous and validation-oriented. Pen tests excel at uncovering novel vulnerabilities, business logic flaws, and high-skill attack paths. However, they are point-in-time assessments and cannot keep pace with modern cloud, identity, and CI/CD environments that change daily. AEV complements penetration testing by continuously validating whether known and newly introduced exposures are exploitable, whether controls degrade over time, and whether remediation actually reduces risk. In mature programs, penetration testing becomes a targeted input into AEV rather than a standalone activity.

What makes an exposure "validated" in AEV terms?

An exposure is validated only when four conditions are met. First, the exposure is exercised using adversary-realistic behavior rather than theoretical modeling. Second, it is shown to be exploitable, either directly or as part of a chained attack path. Third, detection and response mechanisms are tested to determine whether the activity is observed and acted upon. Fourth, remediation is applied and the attack path is re-tested to confirm it no longer works. If any of these steps are skipped, validation has not occurred.
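The four conditions can be expressed as a simple all-or-nothing check. This is only a sketch; the `ExposureEvidence` class and its field names are hypothetical labels for the four steps described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExposureEvidence:
    executed_with_adversary_behavior: bool   # exercised for real, not modeled
    shown_exploitable: bool                  # directly or as part of a chain
    detection_and_response_tested: bool      # tested, whether or not it fired
    path_fails_after_remediation: bool       # re-test confirms the fix

    def missing_steps(self):
        """Names of the conditions that have not been met yet."""
        return [name for name, met in vars(self).items() if not met]

    def is_validated(self):
        """Validated only when every one of the four conditions holds."""
        return not self.missing_steps()


evidence = ExposureEvidence(
    executed_with_adversary_behavior=True,
    shown_exploitable=True,
    detection_and_response_tested=True,
    path_fails_after_remediation=False,
)
print(evidence.is_validated(), evidence.missing_steps())
```

An exposure with a fix applied but no re-test stays unvalidated, which mirrors the rule that skipping any step means validation has not occurred.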

Why is the exploit chain central to AEV?

Modern breaches rarely rely on a single critical vulnerability. They emerge from combinations of low-to-medium severity weaknesses across identity, cloud permissions, SaaS integrations, endpoints, and human workflows. AEV treats exploit chaining as a first-class concern. It evaluates how permissions enable lateral movement, how misconfigurations enable persistence, and how weak detections allow attackers to move quietly across domains. Without chaining, exposure analysis systematically underestimates real risk.

How does AEV validate identity-based attacks?

Identity is the dominant attack surface in modern environments, and AEV reflects that reality. AEV evaluates identity exposures by validating privilege escalation paths, token misuse, stale credentials, misconfigured trust relationships, and role chaining across cloud and SaaS platforms. Rather than flagging excessive permissions in isolation, AEV proves whether those permissions can be abused to reach sensitive assets or actions. This is critical because identity attacks often bypass traditional perimeter and malware-focused controls.

Does AEV generate real exploits in production environments?

AEV generates controlled, safety-bounded execution evidence, not destructive exploitation. Validation uses techniques such as limited privilege actions, non-destructive payloads, canary operations, and synthetic impact markers to prove exploitability without causing harm. Mature AEV platforms enforce blast-radius controls, approval workflows, and environmental awareness to ensure production safety. The goal is to validate feasibility, not to cause disruption.
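A safety gate of this kind might look roughly like the following. The policy fields, action names, and `authorize` function are hypothetical; the sketch only illustrates combining a non-destructive allowlist, a blast-radius cap, and an approval requirement before any step runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyPolicy:
    allowed_actions: frozenset        # non-destructive actions only
    max_targets: int                  # blast-radius cap per step
    approval_required_in: frozenset   # environments that need human sign-off


def authorize(policy, action, targets, environment, approved=False):
    """Return (allowed, reason) for one proposed validation step."""
    if action not in policy.allowed_actions:
        return False, f"action '{action}' is not on the non-destructive allowlist"
    if len(targets) > policy.max_targets:
        return False, f"{len(targets)} targets exceeds the blast-radius cap of {policy.max_targets}"
    if environment in policy.approval_required_in and not approved:
        return False, f"'{environment}' requires approval before execution"
    return True, "ok"


policy = SafetyPolicy(
    allowed_actions=frozenset({"list_objects", "write_canary_marker"}),
    max_targets=3,
    approval_required_in=frozenset({"production"}),
)

print(authorize(policy, "delete_bucket", ["bucket-a"], "staging"))
print(authorize(policy, "write_canary_marker", ["bucket-a"], "production"))
print(authorize(policy, "write_canary_marker", ["bucket-a"], "production", approved=True))
```

Writing a canary marker proves that write access exists without touching real data, which is the sense in which such evidence is safety-bounded.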

How does AEV measure detection and response effectiveness?

AEV measures detection and response by observing how security telemetry behaves during adversary execution. This includes whether alerts fire, whether signals are correlated correctly, how long detection takes, whether alerts reach analysts, and whether response actions are triggered or delayed. Unlike purple-team exercises that are often manual and ad hoc, AEV provides repeatable measurement over time. Detection that is never exercised cannot be trusted.

Why is remediation verification critical in AEV?

Because unverified remediation is indistinguishable from assumed remediation. In many organizations, exposures are marked as "fixed" based on configuration changes or ticket closure, without re-testing the original attack path. AEV explicitly re-executes adversary behavior after remediation to confirm that exploitability has been eliminated and that compensating controls work as intended. This prevents risk from re-appearing silently and ensures that effort translates into measurable exposure reduction.

How does AEV fit into a CTEM strategy?

AEV is the validation pillar of Continuous Threat Exposure Management. CTEM defines how exposures are identified, prioritized, and addressed over time. AEV ensures that prioritization decisions are correct by proving which exposures are exploitable and whether remediation is effective. Without AEV, CTEM remains a planning and reporting framework rather than a risk-reduction engine.

Is AEV a Gartner category, or is it still emerging?

AEV is an emerging category driven by operational necessity rather than marketing invention. As environments become more dynamic and attackers more adaptive, organizations can no longer rely on static assessment models. AEV formalizes practices that advanced security teams have been converging on: continuous adversary emulation, exploit chaining, detection validation, and remediation verification. This convergence is why AEV is increasingly discussed alongside CTEM, autonomous red teaming, and exposure validation in analyst research.

What should buyers look for when evaluating AEV platforms?

Buyers should focus on execution capability rather than dashboards. Key indicators include the ability to emulate real adversary behavior, validate multi-step attack paths, measure detection and response, enforce safe execution controls, and re-test remediation automatically. Platforms that emphasize scores, posture summaries, or static mappings without execution evidence are not delivering AEV.

Why is AEV especially important for cloud and SaaS environments?

Cloud and SaaS environments change faster than humans can audit. Permissions shift, services are redeployed, trust relationships evolve, and identity becomes the primary control plane. AEV continuously validates that these changes have not introduced new exploit paths and that security controls remain effective despite constant drift. Static reviews cannot keep up with this rate of change.

What is the biggest misconception about Adversarial Exposure Validation?

The biggest misconception is that AEV is a tool category. AEV is a discipline. It requires changes in how security teams think about risk, success metrics, and remediation. Tools can enable AEV, but without adversary-centric thinking, closed-loop validation, and operational ownership, the practice fails.

Why do organizations adopting AEV reduce breach risk faster?

Organizations that adopt AEV stop debating theoretical severity and start acting on evidence. They remediate fewer issues, but the right ones. They improve detection based on real attacker behavior. They verify fixes instead of assuming success. Over time, this compounds into faster response, lower residual exposure, and fewer surprise breaches.